Installing k8s storage with Ondat

In this tutorial we will walk through installing persistent storage in Kubernetes with Ondat (formerly StorageOS).

First, why Ondat? There are free alternatives like Rook, and paid ones like Portworx.

My main reason for using Ondat is a limitation of my VPS host: it can't provide separate storage disks, which solutions like Rook and Portworx require.

Because of that limitation, I almost switched VPS providers from Contabo to Hetzner.

A provider like Hetzner could be a better option, because it integrates natively with its own cloud storage. That way we wouldn't need to manage storage ourselves, and performance could be better, since traffic between the hosts and the storage can stay on an internal network.

Ondat is a good option for on-premises clusters, and after some evaluation I decided to stick with it. Its free license covers up to 1 TiB of storage per cluster. For now that is plenty for my use case, but it can get expensive if you need more.

Take a look at the Ondat prerequisites and best practices pages and judge for yourself whether it is a good fit for you: https://docs.ondat.io/docs/prerequisites/ and https://docs.ondat.io/docs/best-practices/

We will reuse the master nodes as the etcd nodes for Ondat. In this cluster, each of the 3 nodes is a master, an etcd node, and a worker at the same time. That can limit performance; if the cluster grows, I think it would be better to separate the masters and etcd nodes from the workers.

Now let's begin. All the steps here are documented in https://docs.ondat.io/docs/prerequisites/ and https://docs.ondat.io/docs/install/

Pre-requisites

First, the kernel modules Ondat needs must be loaded on every node. We are using Ubuntu Server 20.04 LTS.

You can automate this with an Ansible playbook. Make sure Ansible is installed on your machine (Linux, Windows with WSL, or macOS):

git clone git@gitlab.com:gcsilva/ondat-playbook.git

cd ondat-playbook

Edit the inventory/hosts.yml file and add your hosts' IPs or DNS names.

Also make sure you can SSH into the servers without a password, using only SSH keys. To set this up, follow this tutorial: https://phoenixnap.com/kb/ssh-with-key
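Before running the playbook, a quick check that key-based login really works can save a confusing Ansible failure. The function below is a minimal sketch: it assumes the keys are already in place (for example generated with `ssh-keygen` and copied with `ssh-copy-id`) and simply tries a non-interactive login on each host you pass in. The name `check_passwordless_ssh` is made up for this example.

```shell
# check_passwordless_ssh HOST...
# Tries a non-interactive login on each host. BatchMode=yes makes ssh fail
# instead of prompting for a password, so a host that still needs a
# password (or is unreachable) is reported as FAILED.
check_passwordless_ssh() {
  local host failed=0
  for host in "$@"; do
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
      echo "ok: $host"
    else
      echo "FAILED: $host" >&2
      failed=1
    fi
  done
  # Non-zero if at least one host failed.
  return "$failed"
}
```

For example: `check_passwordless_ssh root@host1.srv.yourdomain.com root@host2.srv.yourdomain.com root@host3.srv.yourdomain.com`. Any `FAILED` line means that host is not ready for the playbook yet.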

Now run the playbook

ansible-playbook -i inventories/contabo/ playbooks/ondat.yml

If you get an error saying the role was not found, symlink the roles folder into the playbooks folder:

cd playbooks

ln -s ../roles .

Run the playbook again.
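Once the playbook has run, you can confirm on each node that the modules are actually loaded. This is a sketch, not part of the playbook: the module list is my assumption based on the Ondat prerequisites page, so check it against the docs for the version you install. `missing_modules` is a hypothetical helper name.

```shell
# Assumed list of kernel modules Ondat needs; verify against the Ondat docs.
REQUIRED_MODULES="target_core_mod tcm_loop target_core_file configfs target_core_user uio"

# missing_modules [MODULES_FILE]
# Reads a /proc/modules-style file (module name in the first column) and
# prints each required module that does not appear in it, one per line.
missing_modules() {
  local modules_file="${1:-/proc/modules}"
  local mod
  for mod in $REQUIRED_MODULES; do
    # awk exits 0 only if some line's first field equals the module name.
    if ! awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$modules_file"; then
      echo "$mod"
    fi
  done
}
```

Run it on each node; empty output means every listed module is loaded. A module compiled into the kernel (configfs sometimes is) does not appear in /proc/modules, so a reported configfs is worth double-checking rather than treating it as a definite failure.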

Installing etcd

We will use ansible to install etcd.

First clone the playbooks:

git clone https://github.com/storageos/deploy.git

cd deploy/k8s/deploy-storageos/etcd-helpers/etcd-ansible-systemd

Edit the hosts file: use the external SSH hostnames as the hosts, and set ip (and fqdn) to the internal VPN IPs. (If you followed my other post about a k8s cluster on Contabo, we set up a private VPN there.)

The hosts file should look something like this:

[nodes]
host1.srv.yourdomain.com ansible_user=root ip="10.8.0.1" fqdn="10.8.0.1"
host2.srv.yourdomain.com ansible_user=root ip="10.8.0.2" fqdn="10.8.0.2"
host3.srv.yourdomain.com ansible_user=root ip="10.8.0.3" fqdn="10.8.0.3"

If your client machine is on the same VPN as the servers, you can use their private IPs as the hosts.
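With three nodes the file is easy to write by hand, but if you keep the host-to-IP mapping elsewhere, a small generator keeps the two in sync. A sketch, assuming the same layout as the example above (`ansible_user=root`, and the VPN IP used for both `ip` and `fqdn`); `render_etcd_inventory` is a hypothetical helper.

```shell
# render_etcd_inventory HOST=IP...
# Prints an Ansible [nodes] inventory with one line per HOST=IP pair.
render_etcd_inventory() {
  local pair host ip
  echo "[nodes]"
  for pair in "$@"; do
    host="${pair%%=*}"   # text before the first '='
    ip="${pair#*=}"      # text after the first '='
    printf '%s ansible_user=root ip="%s" fqdn="%s"\n' "$host" "$ip" "$ip"
  done
}
```

Usage: `render_etcd_inventory host1.srv.yourdomain.com=10.8.0.1 host2.srv.yourdomain.com=10.8.0.2 host3.srv.yourdomain.com=10.8.0.3 > hosts`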

Now edit the installation configuration in group_vars/all

Change etcd_port_client and etcd_port_peers to ports that don't collide with the Kubernetes etcd running on the masters (which uses 2379 and 2380 by default). I used 2381 and 2382, respectively.

Also set advertise_format to ip and disable TLS by setting enabled: false under tls. We skip TLS because it makes the Ondat deployment a bit more complicated, since you would need to provide the certificates, and the traffic already goes through an encrypted VPN anyway.
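A port clash here only shows up later as a failing etcd service, so it can help to sanity-check the values before running the playbook. This hypothetical helper assumes the Kubernetes etcd uses its default ports (2379 for clients, 2380 for peers); adjust the list if your masters are configured differently.

```shell
# validate_etcd_ports CLIENT_PORT PEER_PORT
# Fails with a message if the two ports are equal, or if either one is a
# default port of the Kubernetes etcd on the masters (2379 client, 2380 peer).
validate_etcd_ports() {
  local port
  if [ "$1" = "$2" ]; then
    echo "client and peer ports must differ" >&2
    return 1
  fi
  for port in "$1" "$2"; do
    case "$port" in
      2379|2380)
        echo "port $port is already used by the Kubernetes etcd" >&2
        return 1
        ;;
    esac
  done
}
```

`validate_etcd_ports 2381 2382` succeeds. To see what is actually listening on a master, `ss -tln` lists the TCP listeners. If your masters were set up with kubeadm, keep in mind that its etcd also serves metrics on 127.0.0.1:2381 by default.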

Now install etcd

ansible-playbook -i hosts install.yaml
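After the playbook finishes, it is worth checking that the new etcd cluster is healthy before pointing Ondat at it. The helper below only builds the `--endpoints` list for `etcdctl`. It is a sketch assuming plain `http://` (TLS is disabled above) and that `etcdctl`, which ships in the etcd release archive, is available on a machine inside the VPN; `etcd_endpoints` is a hypothetical name.

```shell
# etcd_endpoints PORT IP...
# Joins the IPs into the comma-separated http:// endpoint list etcdctl expects.
etcd_endpoints() {
  local port="$1"
  shift
  local ip out=""
  for ip in "$@"; do
    # ${out:+$out,} adds a comma only after the first entry.
    out="${out:+$out,}http://$ip:$port"
  done
  printf '%s\n' "$out"
}
```

For example, `ETCDCTL_API=3 etcdctl --endpoints="$(etcd_endpoints 2381 10.8.0.1 10.8.0.2 10.8.0.3)" endpoint health` should report every endpoint as healthy.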

Installing OnDat

Install the Ondat cluster operator:

kubectl create -f https://github.com/storageos/cluster-operator/releases/download/v2.4.4/storageos-operator.yaml
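Before creating the Secret and the cluster, you can wait for the operator to come up. A sketch, assuming the operator manifest deploys into the `storageos-operator` namespace (the namespace the Secret and the StorageOSCluster below use); `wait_for_operator` is a hypothetical name.

```shell
# wait_for_operator [TIMEOUT]
# Blocks until every pod in the storageos-operator namespace reports Ready,
# or fails after TIMEOUT (default 180s).
wait_for_operator() {
  kubectl -n storageos-operator wait --for=condition=Ready pod --all --timeout="${1:-180s}"
}
```

If `kubectl wait` reports that no matching resources were found, the pods have not been created yet; wait a few seconds and run it again.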

Create a Secret holding the API and CSI credentials:

apiVersion: v1
kind: Secret
metadata:
  name: "storageos-api"
  namespace: "storageos-operator"
  labels:
    app: "storageos"
type: "kubernetes.io/storageos"
data:
  # echo -n '<secret>' | base64
  apiUsername: c3RvcmFnZW9z
  apiPassword: c3RvcmFnZW9z
  # CSI Credentials
  csiProvisionUsername: c3RvcmFnZW9z
  csiProvisionPassword: c3RvcmFnZW9z
  csiControllerPublishUsername: c3RvcmFnZW9z
  csiControllerPublishPassword: c3RvcmFnZW9z
  csiNodePublishUsername: c3RvcmFnZW9z
  csiNodePublishPassword: c3RvcmFnZW9z
  csiControllerExpandUsername: c3RvcmFnZW9z
  csiControllerExpandPassword: c3RvcmFnZW9z

Replace the example usernames and passwords (c3RvcmFnZW9z is just "storageos" encoded) with your own base64-encoded values, generated with:

echo -n 'user' | base64

echo -n 'secret' | base64
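Encoding ten fields by hand is error-prone, so here is a sketch that renders the same Secret with the values encoded for you. Like the example above, it reuses one username/password pair for the API and every CSI field (use separate values if you prefer). `b64` and `render_storageos_secret` are hypothetical helper names.

```shell
# b64 STRING
# printf '%s' adds no trailing newline (same effect as echo -n), and
# tr removes the line wrapping GNU base64 applies to long values.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# render_storageos_secret USERNAME PASSWORD
# Prints the storageos-api Secret with every credential field encoded.
render_storageos_secret() {
  local user pass
  user="$(b64 "$1")"
  pass="$(b64 "$2")"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: "storageos-api"
  namespace: "storageos-operator"
  labels:
    app: "storageos"
type: "kubernetes.io/storageos"
data:
  apiUsername: $user
  apiPassword: $pass
  csiProvisionUsername: $user
  csiProvisionPassword: $pass
  csiControllerPublishUsername: $user
  csiControllerPublishPassword: $pass
  csiNodePublishUsername: $user
  csiNodePublishPassword: $pass
  csiControllerExpandUsername: $user
  csiControllerExpandPassword: $pass
EOF
}
```

`render_storageos_secret myuser 'a-long-random-password' | kubectl apply -f -` creates the Secret. Keep in mind that arguments typed on the command line end up in your shell history.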

Now create a Service pointing to your external etcd cluster. First create its namespace:

kubectl create namespace storageos-etcd

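Then apply the following manifests, replacing the example IPs with the internal IPs of your etcd nodes and the port with your etcd_port_client value: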

apiVersion: v1
kind: Endpoints
metadata:
  name: storageos-etcd
  namespace: storageos-etcd
  labels:
    app: etcd
    cluster: storageos
subsets:
  - addresses: # internal IPs of your etcd nodes
      - ip: 10.1.10.216
      - ip: 10.1.10.217
      - ip: 10.1.10.218
    ports:
      - name: client
        port: 2381 # etcd_port_client
        protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: storageos-etcd
  namespace: storageos-etcd
  labels:
    app: etcd
    cluster: storageos
spec:
  clusterIP: None
  ports:
    - name: client
      port: 2381
      targetPort: 2381
  selector: null
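The IPs in the Endpoints object have to match your etcd nodes, so if they change it is easier to regenerate the manifest than to edit it. A sketch, assuming the same names and labels as the manifest above; `render_etcd_endpoints` is a hypothetical helper that takes the client port followed by the node IPs.

```shell
# render_etcd_endpoints PORT IP...
# Prints the storageos-etcd Endpoints manifest with one address per IP.
render_etcd_endpoints() {
  local port="$1"
  shift
  local ip
  cat <<EOF
apiVersion: v1
kind: Endpoints
metadata:
  name: storageos-etcd
  namespace: storageos-etcd
  labels:
    app: etcd
    cluster: storageos
subsets:
  - addresses:
EOF
  for ip in "$@"; do
    printf '      - ip: %s\n' "$ip"
  done
  cat <<EOF
    ports:
      - name: client
        port: $port
        protocol: TCP
EOF
}
```

For example, run `render_etcd_endpoints 2381 10.8.0.1 10.8.0.2 10.8.0.3 | kubectl apply -f -`; afterwards `kubectl -n storageos-etcd get endpoints storageos-etcd` should list the three addresses.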

Label every node that should be part of the Ondat cluster:

kubectl label nodes node1 ondat/storage=true # repeat for all nodes
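With several nodes, a loop saves repeating the command. A sketch with a hypothetical helper name; `--overwrite` lets you re-run it without `kubectl label` complaining that the label already exists.

```shell
# label_storage_nodes NODE...
# Adds the ondat/storage=true label used by nodeSelectorTerms to each node.
label_storage_nodes() {
  local node
  for node in "$@"; do
    kubectl label nodes "$node" ondat/storage=true --overwrite
  done
}
```

For example `label_storage_nodes node1 node2 node3`. If every node should run Ondat, `kubectl label nodes --all ondat/storage=true` does the same in one command, and `kubectl get nodes -l ondat/storage=true` shows which nodes carry the label.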

Now create the cluster by applying the following manifest:

apiVersion: "storageos.com/v1"
kind: StorageOSCluster
metadata:
  name: "ondat"
  namespace: "storageos-operator"
spec:
  # Ondat Pods are in kube-system by default
  secretRefName: "storageos-api" # Reference from the Secret created in the previous step
  secretRefNamespace: "storageos-operator" # Namespace of the Secret
  k8sDistro: "upstream"
  images:
    nodeContainer: "storageos/node:v2.4.4" # Ondat version
  kvBackend:
    address: 'storageos-etcd.storageos-etcd:2381' # <service>.<namespace>:<port> of the etcd Service created above
    # address: '10.42.15.23:2379,10.42.12.22:2379,10.42.13.16:2379' # Alternatively, list the etcd server IPs and client port directly
  resources:
    requests:
      memory: "512Mi"
      cpu: 1
  nodeSelectorTerms:
    - matchExpressions:
        - key: "ondat/storage"
          operator: In
          values:
            - "true"

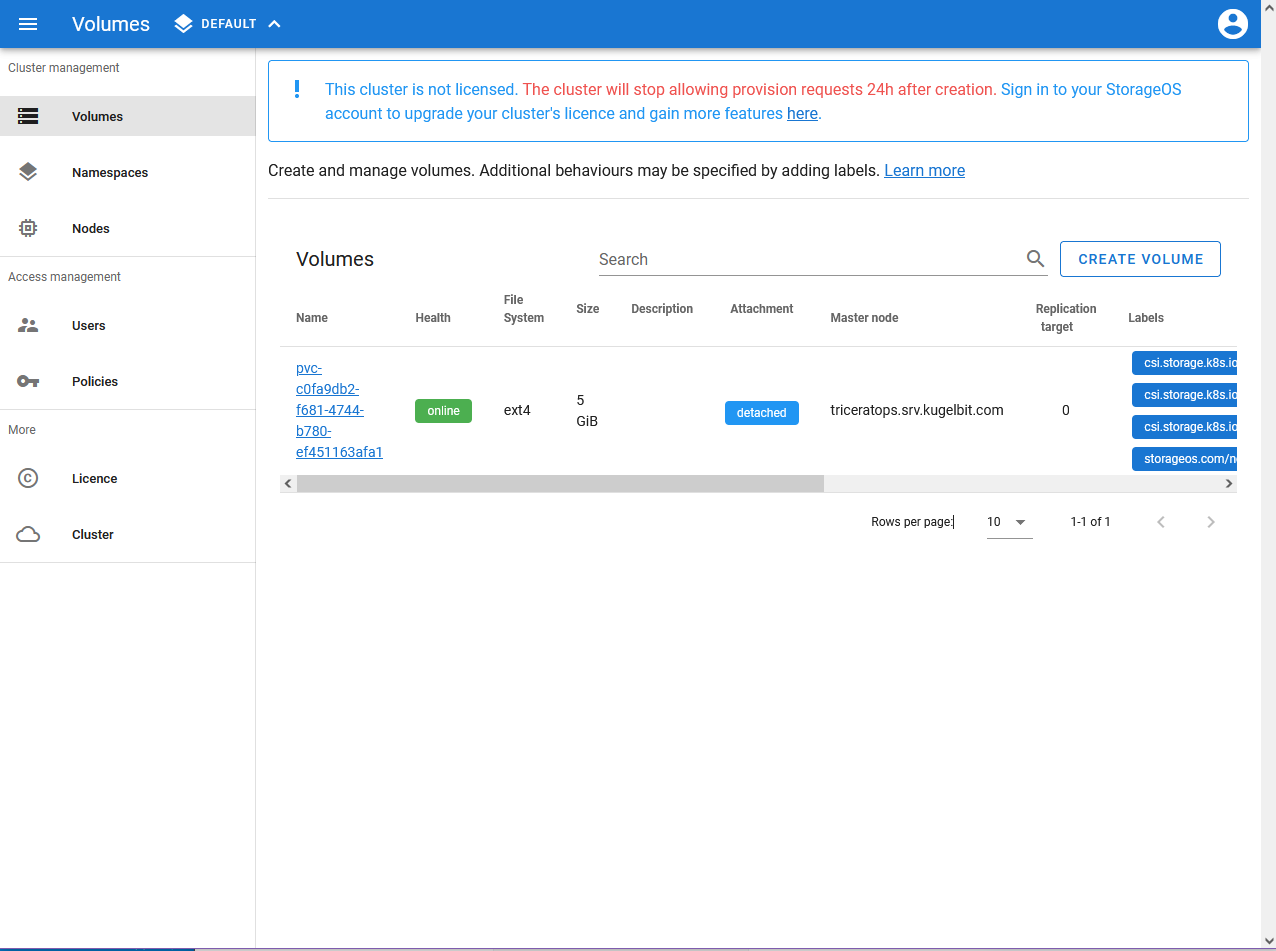

Check your cluster health:

kubectl -n kube-system get pods

The storageos-daemonset pods must be in the Running state.
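Rather than scanning the list by eye, you can filter it. This sketch reads the default table output of `kubectl get pods` (header row included) and prints every `storageos-daemonset` pod that is not Running or whose READY column is incomplete, such as 2/3. It assumes the pods keep the `storageos-daemonset-` name prefix, and the helper name is hypothetical.

```shell
# not_ready_daemonset_pods
# Reads `kubectl get pods` output on stdin and prints the names of
# storageos-daemonset pods that are not Running or not fully ready.
not_ready_daemonset_pods() {
  awk 'NR > 1 && $1 ~ /^storageos-daemonset-/ {
    split($2, ready, "/")   # READY column, e.g. 3/3
    if ($3 != "Running" || ready[1] != ready[2]) print $1
  }'
}
```

`kubectl -n kube-system get pods | not_ready_daemonset_pods` prints nothing once every pod is up.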

Let's log in to the management UI:

kubectl port-forward -n kube-system svc/storageos 5705

Open http://localhost:5705 in your browser.

Log in with the username and password you chose in the Secret.

Let's test the cluster:

git clone https://github.com/storageos/use-cases.git

cd use-cases/00-basics

kubectl apply -f pvc-basic.yaml

kubectl apply -f pod.yaml

kubectl exec -it d1 -- bash

echo 'hello' > /mnt/helloworld.txt

exit

kubectl delete pod d1 # delete the pod

kubectl apply -f pod.yaml

kubectl exec -it d1 -- bash

cat /mnt/helloworld.txt # the content is preserved
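The same test can be scripted without interactive shells, which is handy for re-running it later. A sketch, assuming you run it from `use-cases/00-basics` once the PVC exists and the pod is running, that the pod is `d1` with the volume at `/mnt` as in the steps above, and that its image provides `sh`; `persistence_test` is a hypothetical name.

```shell
# persistence_test
# Writes a file to the volume, recreates the pod, and succeeds only if the
# file is still there with the same content.
persistence_test() {
  kubectl exec d1 -- sh -c 'echo hello > /mnt/helloworld.txt' || return 1
  # kubectl delete waits for the pod to be gone by default.
  kubectl delete pod d1 || return 1
  kubectl apply -f pod.yaml || return 1
  kubectl wait --for=condition=Ready pod/d1 --timeout=120s || return 1
  [ "$(kubectl exec d1 -- cat /mnt/helloworld.txt)" = "hello" ]
}
```

Usage: `persistence_test && echo 'data survived recreating the pod'`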

You should also see the volume in the Ondat UI.

Finally, register your cluster by following the steps in https://docs.ondat.io/docs/operations/licensing/

Your cluster is up! You can now create new StorageClasses with different replication factors, or with encrypted volumes.