Installing a cheap and good Kubernetes HA cluster

For this we will use Rancher's RKE2, documented at https://docs.rke2.io

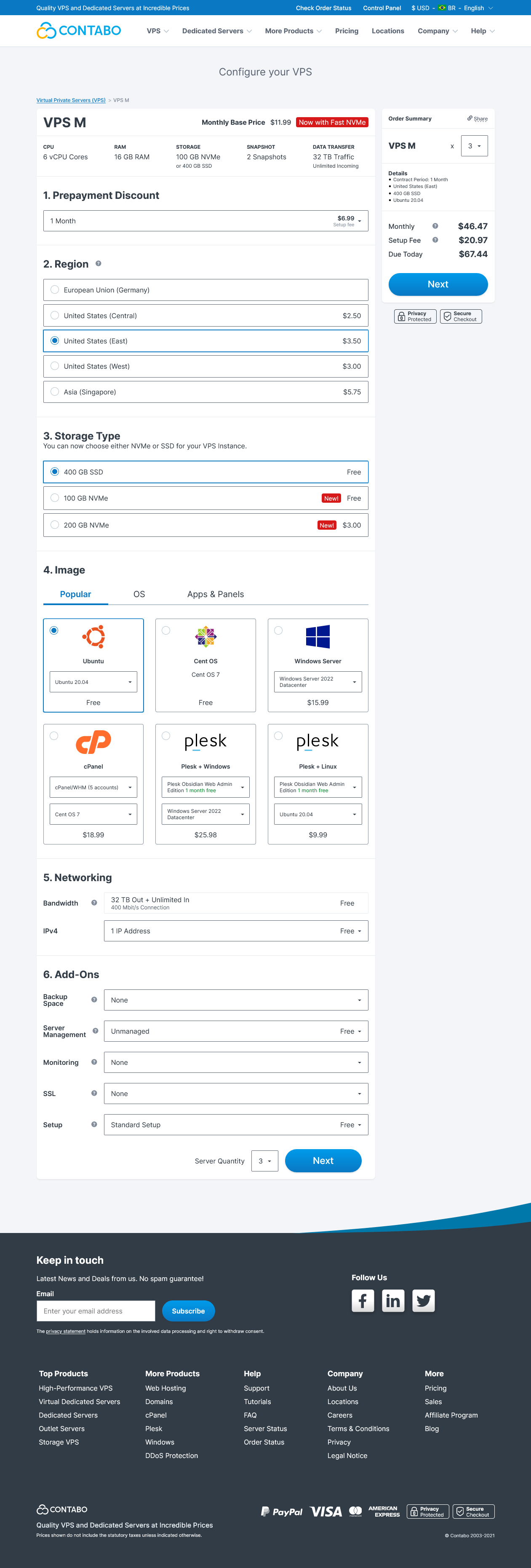

On price and performance, Contabo VPS machines are hard to beat: we can run an HA cluster with plenty of memory for just $46.

Set up the machines in Contabo. We will use 3 Contabo VPS M machines.

After the machines are provisioned, change each hostname to something more useful by following this tutorial: https://linuxconfig.org/how-to-change-hostname-on-linux

Also change the default root password provided by Contabo.

Create a WireGuard VPN

Unfortunately, Contabo doesn't offer a private network, so we will build an encrypted one ourselves.

On each VPS, install WireGuard:

apt install wireguard

Configure WireGuard on each server. You can read a good tutorial here; we will customize the configuration.

First generate a private key and public key on each VPS:

wg genkey | tee /etc/wireguard/private.key

chmod go= /etc/wireguard/private.key # keep the private key readable by root only

cat /etc/wireguard/private.key | wg pubkey | tee /etc/wireguard/public.key

Set up the WireGuard configuration in /etc/wireguard/wg0.conf:

[Interface]

Address = 10.8.0.1/22

SaveConfig = false

ListenPort = 51820

PrivateKey = this-server-private-key

[Peer]

PublicKey = second-server-pubkey

Endpoint = second-server-ip-or-dns:51820

AllowedIPs = 10.8.0.2

[Peer]

PublicKey = third-server-pubkey

Endpoint = third-server-ip-or-dns:51820

AllowedIPs = 10.8.0.3

[Peer]

PublicKey = windows-pubkey

AllowedIPs = 10.8.3.1

Repeat the process on each server: leave the server's own IP out of the peer list, put its own private key in the [Interface] section, and allocate it a different address. The address of each server must match the AllowedIPs directive that the other servers use for it.

Choose a different CIDR for the IP addresses if you wish.

Also install WireGuard on your client machine if you wish to join the private network from home: https://www.wireguard.com/install/

Note: There is an awesome project called Netmaker (https://github.com/gravitl/netmaker) that automates all of this and keeps it up to date. It requires you to set up another VM with a public IP, and it provides a nice UI.
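
The per-server variation can be sketched as a small shell function. This is only an illustration under stated assumptions: the function name `render_wg_conf`, the `PEERS` table, and the placeholder keys and endpoints in it are hypothetical, and the home client peer from the example above is left out for brevity.

```shell
#!/bin/sh
# Hypothetical peer table: one "vpn-ip public-key endpoint" entry per line.
# The keys and endpoints are placeholders; substitute your real values.
PEERS="10.8.0.1 first-server-pubkey first-server-ip-or-dns
10.8.0.2 second-server-pubkey second-server-ip-or-dns
10.8.0.3 third-server-pubkey third-server-ip-or-dns"

# render_wg_conf SELF_IP PRIVATE_KEY
# Prints a wg0.conf for the server that owns SELF_IP: its own address goes
# in [Interface], and every other entry in PEERS becomes a [Peer] section.
render_wg_conf() {
    self_ip=$1
    private_key=$2

    printf '[Interface]\n'
    printf 'Address = %s/22\n' "$self_ip"
    printf 'SaveConfig = false\n'
    printf 'ListenPort = 51820\n'
    printf 'PrivateKey = %s\n' "$private_key"

    printf '%s\n' "$PEERS" | while read -r ip pubkey endpoint; do
        # A server must not list itself as a peer.
        if [ "$ip" != "$self_ip" ]; then
            printf '\n[Peer]\n'
            printf 'PublicKey = %s\n' "$pubkey"
            printf 'Endpoint = %s:51820\n' "$endpoint"
            printf 'AllowedIPs = %s\n' "$ip"
        fi
    done
}
```

On the second server, for example, `render_wg_conf 10.8.0.2 "$(cat /etc/wireguard/private.key)" > /etc/wireguard/wg0.conf` would write a file with peers 10.8.0.1 and 10.8.0.3 only.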

Enable and start the WireGuard service:

systemctl enable wg-quick@wg0.service

systemctl start wg-quick@wg0.service

Check that you can ping every server from any point in the mesh.
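
The connectivity check can be scripted so it is easy to repeat on every node. A minimal sketch, assuming the 10.8.0.x addresses used in this post and the Linux `ping` options `-c` (count) and `-W` (timeout in seconds); `check_mesh` and `PING_CMD` are hypothetical names introduced here.

```shell
#!/bin/sh
# PING_CMD is a hypothetical override hook so the check can be dry-run;
# by default it sends a single ICMP echo and waits up to 2 seconds.
PING_CMD=${PING_CMD:-"ping -c 1 -W 2"}

# check_mesh IP...
# Pings every address given and reports which ones answer.
# Returns non-zero if at least one address is unreachable.
check_mesh() {
    failed=0
    for ip in "$@"; do
        # PING_CMD is intentionally unquoted so its options split into words.
        if $PING_CMD "$ip" >/dev/null 2>&1; then
            echo "$ip reachable"
        else
            echo "$ip UNREACHABLE"
            failed=1
        fi
    done
    return "$failed"
}
```

Running `check_mesh 10.8.0.1 10.8.0.2 10.8.0.3` on each server in turn covers every direction of the mesh.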

Install Kubernetes with RKE2

Create a config file, config.yaml, for the first master:

token: xxxxx

tls-san:

- k8s.yourdomain.com

- k8s.internal.yourdomain.com

- server1.yourdomain.com

- server2.yourdomain.com

- server3.yourdomain.com

- 10.8.0.1

- 10.8.0.2

- 10.8.0.3

disable:

- rke2-ingress-nginx

advertise-address: 10.8.0.1

node-ip: 10.8.0.1

node-external-ip: xx.xx.xx.xx

Replace the domains with your own DNS names. The tls-san directive tells RKE2 which names and IPs to include in the certificate generated for the master API server.

I create a DNS record pointing to 10.8.0.1, which serves the API internally over the VPN. After the installation, this record can point to all 3 IPs, giving you simple load balancing between them when registering new workers/hosts.

I also disable the rke2-ingress-nginx controller because I plan to use Traefik instead. If you want a quick ingress, leave it enabled by removing it from the disable directive.

Create the directory /etc/rancher/rke2 and copy the file to it:

mkdir -p /etc/rancher/rke2

cp config.yaml /etc/rancher/rke2/config.yaml

Create another file, configure-canal.yaml, to make the Canal network use the WireGuard interface:

apiVersion: helm.cattle.io/v1

kind: HelmChartConfig

metadata:

  name: rke2-canal

  namespace: kube-system

spec:

  valuesContent: |-

    flannel:

      iface: "wg0"

Create the directory /var/lib/rancher/rke2/server/manifests and copy the file to it.

mkdir -p /var/lib/rancher/rke2/server/manifests

cp configure-canal.yaml /var/lib/rancher/rke2/server/manifests

Configure the firewall on all nodes (the rules are the same):

ufw limit ssh # rate-limit SSH to slow down brute-force attacks

ufw allow 51820/udp # wireguard

ufw allow http

ufw allow https

ufw allow from 10.8.0.0/22

ufw allow 6443

ufw enable

Install RKE2 on the first master node:

curl -sfL https://get.rke2.io | sh -

systemctl enable rke2-server.service

systemctl start rke2-server.service

Wait for the cluster to come up.

Let's check that the master is up and ready:

export CRI_CONFIG_FILE=/var/lib/rancher/rke2/agent/etc/crictl.yaml KUBECONFIG=/etc/rancher/rke2/rke2.yaml PATH=$PATH:/var/lib/rancher/rke2/bin

kubectl get nodes
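
Rather than re-running kubectl get nodes by hand, the wait can be scripted. A minimal sketch, assuming the environment variables exported above are set in the current shell; `wait_until` and `INTERVAL` are hypothetical names introduced here, not part of RKE2 or kubectl.

```shell
#!/bin/sh
# wait_until TIMEOUT_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND every INTERVAL seconds (default 5) until it succeeds.
# Returns 0 on success, 1 if TIMEOUT_SECONDS elapse first.
wait_until() {
    timeout=$1
    shift
    interval=${INTERVAL:-5}
    elapsed=0
    until "$@" >/dev/null 2>&1; do
        if [ "$elapsed" -ge "$timeout" ]; then
            return 1
        fi
        sleep "$interval"
        elapsed=$((elapsed + interval))
    done
    return 0
}
```

For example, `wait_until 600 kubectl get nodes && kubectl get nodes` polls for up to ten minutes and then prints the node list. Note that this only confirms the API server answers; check the STATUS column to see whether the node is Ready.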

Install RKE2 on the second master node

First create the config file. It is almost the same as the previous one:

server: https://k8s.internal.yourdomain.com:9345

token: xxxxx

tls-san:

- k8s.yourdomain.com

- k8s.internal.yourdomain.com

- server1.yourdomain.com

- server2.yourdomain.com

- server3.yourdomain.com

- 10.8.0.1

- 10.8.0.2

- 10.8.0.3

disable:

- rke2-ingress-nginx

advertise-address: 10.8.0.2

node-ip: 10.8.0.2

node-external-ip: xx.xx.xx.xx

Note that it now has a server directive. It points to your internal DNS name, which resolves to 10.8.0.1, the VPN address of your first master node.

Create the config directory and copy that file:

mkdir -p /etc/rancher/rke2

cp config.yaml /etc/rancher/rke2/config.yaml

Install the secondary master:

curl -sfL https://get.rke2.io | sh -

systemctl enable rke2-server.service

systemctl start rke2-server.service

Wait for the service to come up, then check its status from the first master:

kubectl get nodes

You can also check that the second master can run kubectl commands itself:

export CRI_CONFIG_FILE=/var/lib/rancher/rke2/agent/etc/crictl.yaml KUBECONFIG=/etc/rancher/rke2/rke2.yaml PATH=$PATH:/var/lib/rancher/rke2/bin

kubectl get nodes

kubectl -n kube-system get pods

Repeat the process for the final node.

We now have a highly available setup with 3 nodes, each acting as master (control plane) and worker.

When you have finished, update your DNS to point to all 3 masters in the internal network, or create a load balancer across all of them on port 6443 for the API server.

You have other options, such as setting up dedicated masters and separate workers. See https://docs.rke2.io/install/ha/ for instructions.

As next steps, we will deploy the Traefik ingress and put a Cloudflare load balancer in front of it.
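
Since the three config files differ only in a few lines, they can be generated from one template. A sketch under stated assumptions: `render_rke2_config` and `TOKEN` are hypothetical names introduced here, and the domains, token and external IP are the same placeholders used in the examples above.

```shell
#!/bin/sh
# TOKEN is a placeholder; use the same shared token on every node.
TOKEN=${TOKEN:-xxxxx}

# render_rke2_config VPN_IP EXTERNAL_IP [SERVER_URL]
# Prints a config.yaml for one master. SERVER_URL is omitted for the first
# master and set to the registration address for the masters that join it.
render_rke2_config() {
    vpn_ip=$1
    external_ip=$2
    server_url=${3:-}

    if [ -n "$server_url" ]; then
        printf 'server: %s\n' "$server_url"
    fi
    printf 'token: %s\n' "$TOKEN"
    cat <<EOF
tls-san:
- k8s.yourdomain.com
- k8s.internal.yourdomain.com
- server1.yourdomain.com
- server2.yourdomain.com
- server3.yourdomain.com
- 10.8.0.1
- 10.8.0.2
- 10.8.0.3
disable:
- rke2-ingress-nginx
advertise-address: $vpn_ip
node-ip: $vpn_ip
node-external-ip: $external_ip
EOF
}
```

For the third node this would be called as `render_rke2_config 10.8.0.3 xx.xx.xx.xx https://k8s.internal.yourdomain.com:9345 > /etc/rancher/rke2/config.yaml`, with xx.xx.xx.xx replaced by that node's public IP.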